BellHouse is a sound sculpture that translates visual data (moving images) into the sound of 34 ceramic bells struck by robotic beaters.

It can receive any moving image file (from a .GIF to a .MOV and most things in between) and can also capture live data from a webcam. Live capture is what it was originally created for: recording the movement of the presenting scientists at the EUPORIAS General Assembly in 2016.

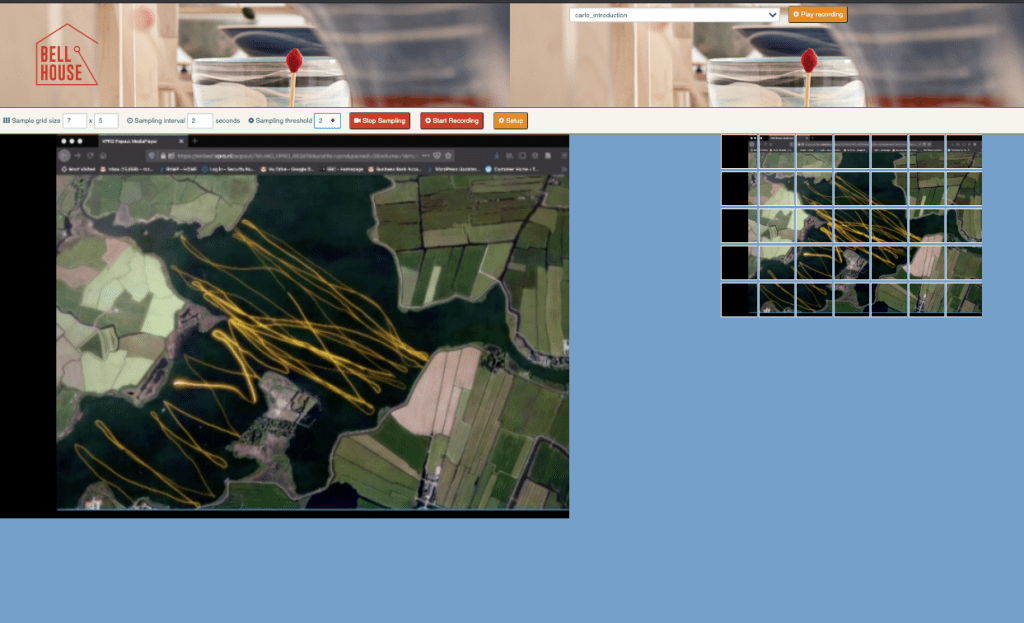

This information, live or pre-recorded, is fed through software which divides the image frame into a grid of squares or rectangles.

In each part of the grid the software looks for colour change (RGB) over a given period of time. If there is enough of a change in any part of the grid, it will send a request over the internet to a second computer in the sculpture to strike the bell that corresponds to the part of the grid where the colour values have changed. This can be one part of the grid, several of them, or all of them.
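As a rough illustration of the grid step described above, here is a minimal Python sketch. It is not the BellHouse software itself: the function names, the mean-absolute-difference measure, the grid size and the threshold value are all assumptions made for the example, and the frames are plain nested lists of RGB tuples so that nothing beyond the standard library is needed.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each 0-255
Frame = List[List[Pixel]]      # frame[y][x]


def cell_change(prev: Frame, curr: Frame, x0: int, x1: int, y0: int, y1: int) -> float:
    """Mean absolute RGB difference per pixel inside one grid cell (assumed measure)."""
    total = 0
    for y in range(y0, y1):
        for x in range(x0, x1):
            total += sum(abs(a - b) for a, b in zip(prev[y][x], curr[y][x]))
    return total / ((x1 - x0) * (y1 - y0))


def changed_cells(prev: Frame, curr: Frame, rows: int, cols: int, threshold: float) -> List[int]:
    """Return row-major indices of grid cells whose colour change exceeds the threshold.

    Assumes the frame is at least as large as the grid in both directions.
    """
    height, width = len(curr), len(curr[0])
    hits = []
    for r in range(rows):
        for c in range(cols):
            y0, y1 = r * height // rows, (r + 1) * height // rows
            x0, x1 = c * width // cols, (c + 1) * width // cols
            if cell_change(prev, curr, x0, x1, y0, y1) > threshold:
                hits.append(r * cols + c)  # hypothetical mapping: one bell per cell
    return hits


# Tiny 4x4 frame split into a 2x2 grid; only the top-right cell changes.
black = [[(0, 0, 0)] * 4 for _ in range(4)]
moved = [row[:] for row in black]
for y in range(2):
    for x in range(2, 4):
        moved[y][x] = (255, 255, 255)

print(changed_cells(black, moved, rows=2, cols=2, threshold=50))  # [1]
```

Each returned index stands for one bell. In the real installation the equivalent result is sent as a request to the computer inside the sculpture, which is left out here.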

There are two main variables in the motion capture software: the sampling interval and the sampling threshold.

Sampling Interval

BellHouse can sample the incoming information at any interval from every half a second to every 10 seconds or longer. It generally works best between 1.5 and 3 seconds. The mallets need 1.5 seconds to reset themselves properly, and intervals longer than three seconds can set a very slow pace of change. Also, because BellHouse records the total colour value change during the interval, a slower setting tends to produce a more universal striking of bells.

Sampling Threshold

This variable sets the amount of colour change that needs to take place before a request is sent to the Raspberry Pi to strike a bell. Adjusting it can help to find an optimum strike rate and gives the user some flexibility to find a sound or a pattern in the sounds.
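To show how the two variables interact, the following Python sketch accumulates colour change per grid cell over one sampling interval and only then compares the total with the threshold. It is an illustration under stated assumptions, not the actual BellHouse code: the class `IntervalSampler`, the helper `strikes`, the use of a frame count in place of seconds, and all the numbers are hypothetical.

```python
from typing import List


class IntervalSampler:
    """Accumulates per-cell colour change and reports once per sampling interval."""

    def __init__(self, n_cells: int, frames_per_interval: int, threshold: float):
        self.frames_per_interval = frames_per_interval  # stands in for the interval in seconds
        self.threshold = threshold
        self.totals = [0.0] * n_cells
        self.frames_seen = 0

    def add_frame(self, cell_changes: List[float]) -> List[int]:
        """Add one frame's per-cell change; return cells to strike when the interval ends."""
        for i, change in enumerate(cell_changes):
            self.totals[i] += change
        self.frames_seen += 1
        if self.frames_seen < self.frames_per_interval:
            return []
        hits = [i for i, total in enumerate(self.totals) if total > self.threshold]
        # Reset so the next interval starts from zero.
        self.totals = [0.0] * len(self.totals)
        self.frames_seen = 0
        return hits


def strikes(frames: List[List[float]], frames_per_interval: int, threshold: float) -> List[List[int]]:
    """Collect the list of struck cells at the end of every complete interval."""
    sampler = IntervalSampler(len(frames[0]), frames_per_interval, threshold)
    out = []
    for i, frame in enumerate(frames, start=1):
        hits = sampler.add_frame(frame)
        if i % frames_per_interval == 0:
            out.append(hits)
    return out


# Three cells with steady per-frame change of 10, 2 and 0 (made-up values).
frames = [[10.0, 2.0, 0.0]] * 12

print(strikes(frames, frames_per_interval=2, threshold=20.0))   # six empty lists
print(strikes(frames, frames_per_interval=6, threshold=20.0))   # [[0], [0]]
print(strikes(frames, frames_per_interval=12, threshold=20.0))  # [[0, 1]]
```

With the same footage and threshold, the longest interval is the only one in which the slowly changing second cell crosses the threshold, which matches the observation that slower settings strike more bells at once.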

Format

It can be helpful when playing the BellHouse to think about the format of your data. We will often experiment with resizing the moving image to make sure that we get the most movement (colour change) out of the sampled material, but it is also useful to think of different ways of presenting your data that might give a more precise response. An example of this is a submission by Met Office scientist Mark MacCarthy, which you can see here. He took the data points in the original feed and arranged them into a grid that more accurately fits the grid BellHouse works from.

For further examples of how climate data can be played, have a look at some of the posts from the EUPORIAS installation here.

Lastly, BellHouse can also record any data that is played through it as its own data file. This means we can play it back at a later date and capture an audio or video recording if it is not possible to do this at the time of the interaction itself (if live).
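One simple way to picture such a recording is as a log of timed strike events that can be written out and read back later. The sketch below is purely illustrative: the JSON Lines layout, the field names `t` and `bells`, and the functions `save_events` and `load_events` are assumptions, not the file format BellHouse actually uses.

```python
import json
import os
import tempfile
from typing import List, Tuple

Event = Tuple[float, List[int]]  # (seconds since start, bell indices struck)


def save_events(path: str, events: List[Event]) -> None:
    """Write one JSON object per line, one line per strike event."""
    with open(path, "w", encoding="utf-8") as fh:
        for t, bells in events:
            fh.write(json.dumps({"t": t, "bells": bells}) + "\n")


def load_events(path: str) -> List[Event]:
    """Read the events back in the order they were recorded."""
    events = []
    with open(path, encoding="utf-8") as fh:
        for line in fh:
            if line.strip():
                record = json.loads(line)
                events.append((record["t"], record["bells"]))
    return events


# Made-up session: three sampling intervals, 1.5 seconds apart.
events = [(0.0, [3]), (1.5, [3, 12, 30]), (3.0, [])]

path = os.path.join(tempfile.mkdtemp(), "session.jsonl")
save_events(path, events)
restored = load_events(path)
print(restored == events)  # True
```

Replaying would then mean stepping through the restored events and striking the listed bells at each recorded time offset.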

For the Climateurope Webstival in 2020 we plan to play BellHouse live over the internet to the conference. This time we will be using a split screen technique so you will be able to see the motion capture software working and, at the same time, BellHouse responding to it in real time as a livestream.

To see a demonstration of how BellHouse plays “The Climate Spiral” by Ed Hawkins, have a look here.
